Bad code is contagious

How imitation machines turn local mistakes into system-wide conventions

Bad code has always spread through codebases by imitation. AI just turned a slow cultural process into an epidemic.

When a new developer joins a project, they learn what code should look like by copying what’s already there. Style guides are usually out of date or aspirational. ADRs capture decisions but not necessarily reality. The only reliable signal is the code itself, and “when in Rome” is a sensible default.

The problem is that the default propagates whatever is already there, good or bad. Point an imitation machine at a corpus of imperfect code and you get propagation and compounding. Just like a virus.

Why code conventions spread like a virus

In small teams, conventions spread deliberately. Someone makes a call, writes it down[1], mentions it in review, and the team mostly follows.

At scale, that breaks down. Most developers learn conventions less from documentation than from neighbouring code. The local environment becomes the fitness landscape, and “when in Rome” becomes the replication mechanism.

AI agents just accelerate this process.

A short primer on SIR

The SIR model divides a population into three states: Susceptible, Infected, Recovered. Within the model there are three important numbers.

Transmission probability - when an infected individual contacts a susceptible one, this is the chance the pathogen passes across.

Contact rate - how often infected individuals contact susceptibles in the first place.

Recovery rate - how quickly infected individuals stop being infected.

Multiply the transmission probability by the contact rate, then divide by the recovery rate (equivalently, multiply by how long an individual stays infectious), and you get R0, the basic reproduction number. It is the number of new infections caused, on average, by a single infected individual in a fully susceptible population. As you will remember from the COVID era, if R0 is below one[2], the outbreak dies out. If R0 is above one, it grows.
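For reference, the textbook relationship can be written compactly. The symbols are labels chosen here for illustration: $p$ is the transmission probability per contact, $c$ is the contact rate per unit time, $\gamma$ is the recovery rate, and $D$ is the average time spent infected.

```latex
R_0 = \frac{p \, c}{\gamma} = p \, c \, D, \qquad D = \frac{1}{\gamma}
```

Read this way, the recovery rate sits in the denominator: faster recovery shortens the window in which each infection can be passed on.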

So, let's stretch this model across to software.

Transmission probability is now how convincing a bad pattern looks when an agent reads it. A well-named, well-structured and well-commented bad pattern transmits at a much higher rate than a scruffy one. This is part of why AI-generated code is so dangerous: it looks just as plausible as human-written code, if not more so.

Contact rate is how aggressively agent sessions sample neighbouring files. Big context windows, tools that pull in all relevant files, and prompts that say things like “follow the existing patterns in this repo” all increase the contact rate.

Recovery rate is how quickly you refactor, review or remove the pattern. This is entirely a function of your engineering practices. You get to control this one!
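To make the three knobs concrete, here is a minimal sketch that plugs software-flavoured numbers into the textbook SIR ratio. The class name, field names and every value are hypothetical and chosen purely for illustration; nothing here is measured from a real codebase.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SpreadParameters:
    """Hypothetical knobs for how one bad pattern spreads (rates are per day)."""

    transmission_probability: float  # chance an agent copies the pattern after reading it
    contacts_per_day: float          # how often an infected file is read into a session
    recoveries_per_day: float        # share of infected components cleaned up each day

    def r0(self) -> float:
        # Textbook SIR ratio: infections caused per day, times days spent infected.
        return (self.transmission_probability * self.contacts_per_day
                / self.recoveries_per_day)


# Illustrative values only.
wide_open = SpreadParameters(0.5, 4.0, 0.25)
narrow_context = SpreadParameters(0.5, 1.0, 0.25)
narrow_and_fast_cleanup = SpreadParameters(0.5, 1.0, 1.0)

for name, params in [
    ("wide open", wide_open),
    ("narrow context", narrow_context),
    ("narrow context + fast cleanup", narrow_and_fast_cleanup),
]:
    print(f"{name}: R0 = {params.r0():.1f}")
```

With these made-up numbers, narrowing the context cuts R0 from 8.0 to 2.0, but only the faster cleanup brings it below one (0.5).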

The R0 threshold

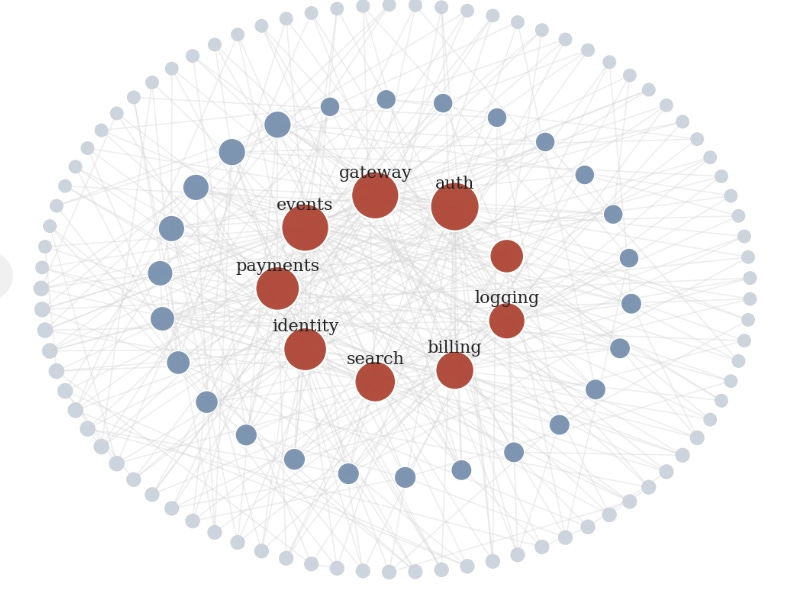

For the first example, let’s pretend we have the architecture below. There are roughly 120 components. There are some foundational services in the middle that almost everything else uses, but most of the classes only use a handful of other classes.

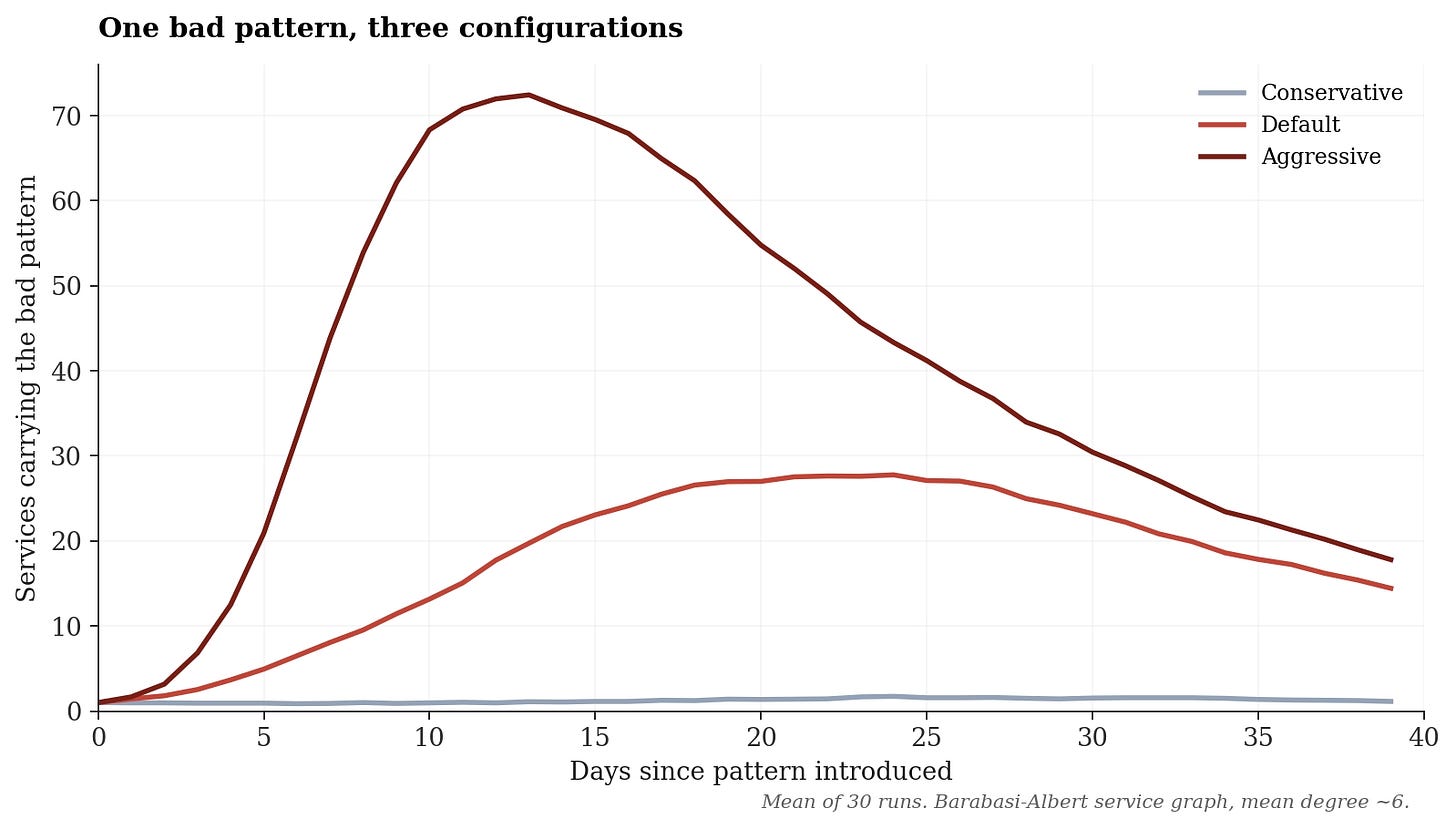

We will treat AI-generated code as the virus and simulate three different styles of usage.

Conservative - In this example, think of mostly using AI within files, with tight prompts and narrow context. Used this way, code patterns spread slowly enough that recovery can keep pace. R0 in this case is around one.

Default - Now we are imagining a more typical AI harness with no special constraints. AI pulls in patterns as needed through the harness and uses much of the context window. R0 is about five in this case. The pattern does not burn through every component, but it is sticky enough that you’ve now got roughly a quarter of your components carrying a wrong pattern, and it is hard to remove since it has prior art on its side.

Aggressive - Wide context windows, tools that eagerly sample neighbours, and prompts that tell the agent to follow existing patterns can push R0 up to fifteen. This is bad. By day thirteen, the contaminated pattern is the dominant one.

The important thing about R0 is that it behaves like a threshold, not a linear dial. Below one, the pattern struggles to sustain itself. Above one, it starts finding chains of transmission. The difference between 0.9 and 1.1 is not a rounding error. It is the difference between local noise and systemic spread.
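The threshold behaviour can be explored with a much simpler model than the one behind the charts. The sketch below is a deterministic, well-mixed SIR model stepped forward in small time increments. It is not the simulation used for this post (that one runs over the component graph), and the recovery rate of 0.2 per day is an assumption, so the numbers will not match the charts.

```python
def simulate_sir(r0, population=120, initially_infected=1.0,
                 recovery_rate=0.2, days=365, steps_per_day=10):
    """Deterministic, well-mixed SIR. Returns (peak_infected, ever_infected)."""
    beta = r0 * recovery_rate  # transmission rate implied by the chosen R0
    dt = 1.0 / steps_per_day
    s = population - initially_infected
    i = initially_infected
    r = 0.0
    peak = i
    for _ in range(days * steps_per_day):
        new_infections = beta * s * i / population * dt
        new_recoveries = recovery_rate * i * dt
        s -= new_infections
        i += new_infections - new_recoveries
        r += new_recoveries
        peak = max(peak, i)
    return peak, i + r


for r0 in (0.9, 1.1, 5.0, 15.0):
    peak, total = simulate_sir(r0)
    print(f"R0={r0:>4}: peak {peak:5.1f}, ever infected {total:5.1f} of 120")
```

Below one, the infected count never rises above its starting value. Above one, it grows before it falls, even when R0 is only just past the threshold.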

Vaccinating your codebase

With the industry drive being to “AI all the things”, R0 is mostly out of our collective hands. You might be able to constrain agent configuration at the margin, but not the overall trajectory. So the question becomes: what can you do about the population itself?

The epidemiological answer is vaccination. You do not need to protect everyone, but you need to protect enough of the population that the average infected individual passes the infection on to fewer than one other individual. If you can achieve that, then the effective R drops below one and the outbreak dies even though R0 in the unprotected subpopulation is still high. This is herd immunity, and it is one of the most counter-intuitive and important results in the field.
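For a well-mixed population, the textbook version of this argument fits in two small functions. This is a sketch under that well-mixed assumption, with function names invented here. A codebase is a network rather than a well-mixed population, so the exact threshold in a graph-based simulation can differ.

```python
def effective_r(r0: float, immune_fraction: float) -> float:
    """Reproduction number once a fraction of the population cannot be infected."""
    return r0 * (1.0 - immune_fraction)


def herd_immunity_threshold(r0: float) -> float:
    """Smallest immune fraction that brings the effective R down to one."""
    if r0 <= 1.0:
        return 0.0  # the outbreak already dies out without any immunity
    return 1.0 - 1.0 / r0


for r0 in (1.0, 5.0, 15.0):
    print(f"R0={r0:>4}: threshold {herd_immunity_threshold(r0):.0%}")
```

The higher R0 climbs, the closer the required coverage creeps towards everyone, which is why reducing R0 and raising coverage work best together.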

The software analogue to a vaccine is architectural. A vaccinated component is one where the bad pattern cannot take hold. A vaccine could be a lint rule, an automated test, a schema contract or even a golden-path scaffold that wins because the agent reads it first. Vaccinated services are immune not because they are better written, but because the environment around them actively refuses the contaminated pattern.
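As one possible shape for such a vaccine, here is a minimal sketch of a lint-style check built on Python's standard `ast` module. The forbidden module name `legacy_db` and the function name are hypothetical; a real rule would encode whatever pattern you want your services to refuse.

```python
import ast

FORBIDDEN_MODULES = {"legacy_db"}  # hypothetical module name, for illustration only


def find_forbidden_imports(source: str, forbidden=FORBIDDEN_MODULES):
    """Return (line_number, module) for every import of a forbidden module."""
    violations = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            names = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom):
            names = [node.module or ""]  # module is None for "from . import x"
        else:
            continue
        for name in names:
            if name.split(".")[0] in forbidden:
                violations.append((node.lineno, name))
    return sorted(violations)


sample = "import os\nfrom legacy_db.session import connect\nimport legacy_db\n"
print(find_forbidden_imports(sample))
# [(2, 'legacy_db.session'), (3, 'legacy_db')]
```

Wired into CI or a pre-commit hook, a non-empty result would fail the change, so the pattern is refused regardless of who or what wrote it.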

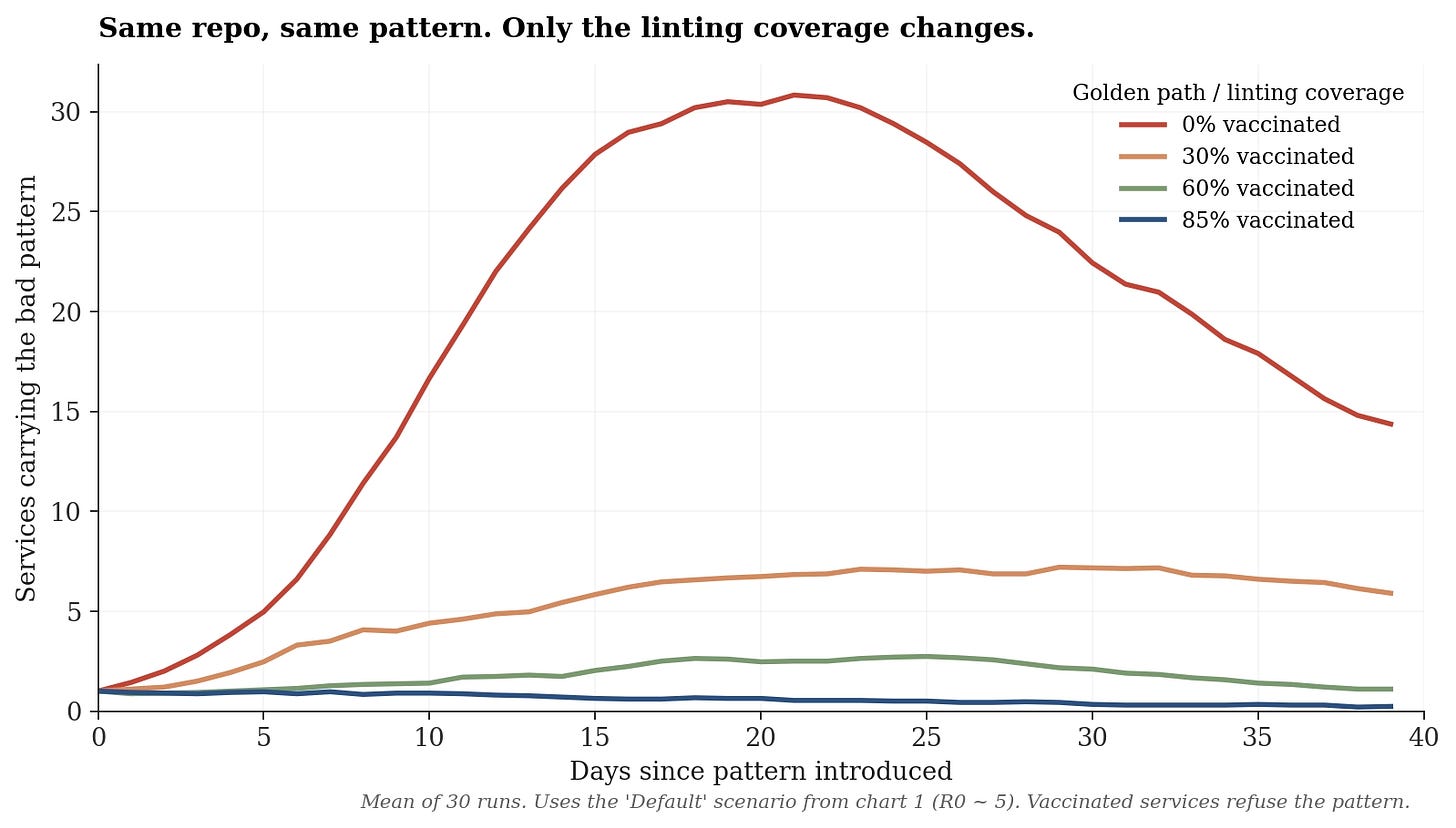

In the next chart, we take the Default scenario and vary the fraction of services that have vaccination applied.

You might be thinking, surely if I write the correct rule, I can vaccinate 100%? Well, if that were true then you’d be able to write an oracle to say if something was good or bad, and I think that’s too hard for me! I prefer to look at it from the other direction. If I can write a fuzzy rule that rejects a large enough percentage of bad code, then the pattern stops spreading.

At zero coverage the outbreak peaks around thirty services. At thirty percent coverage the peak collapses by three-quarters to about seven. At sixty percent it is essentially extinguished - you get small, transient flare-ups that recover on their own. At eighty-five percent the pattern cannot get going at all.

This matters for engineering decisions because the intuitive model says, “we will roll out linting progressively, starting with the most critical services”. The epidemiological model says something different: the value of linting is strongly non-linear in coverage, and you do not get real protection until you are above the herd-immunity threshold. A thirty percent rollout does not deliver thirty percent of the benefit. In this simulation, it removes roughly seventy-five percent of the peak outbreak, because it breaks transmission chains.

Vaccinate your codebase!

If this analogy holds, then the interventions we should make to stop bad patterns spreading are:

Golden paths are vaccination - Every component that uses a golden path is a vaccinated member of the population. Golden paths will get copied by the AI. Herd immunity appears when enough of the codebase uses them.

Linting rules / Architectural fitness functions are barriers at the point of contact (masks, hand-washing etc.) - They do not prevent a bad pattern being generated, but instead they catch it before it reaches a service. A good lint rule is one that blocks the wrong thing from being committed. It also does not have to be 100% effective.

Agent scope is the contact rate - An agent session that touches many files is a house party! Smaller, better-defined tasks aren’t just about code quality; they reduce R0 directly.

Refactoring is treatment - This is the recovery rate. It is the one parameter you can raise directly and it’s the one most often neglected. “We will refactor when we have time” is the epidemiological equivalent of “we will treat the sick when we have time”. You can raise the recovery rate by funding platform teams, by protecting time for refactoring, or by making it cheap enough to happen inside ordinary work.

Observability is surveillance - You can’t respond to an outbreak you cannot see. Tools that scan for pattern proliferation (duplicated logic, drift from golden paths) are the codebase equivalent of contact tracing.
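Surveillance can start very small. The sketch below measures what fraction of files carry a given pattern, so that the number can be tracked over time. The regular expression (an exception handler that swallows everything) and the file contents are hypothetical stand-ins for whatever pattern you are worried about.

```python
import re

# Hypothetical signature of a bad pattern: a handler that swallows every exception.
BAD_PATTERN = re.compile(r"except\s+Exception\s*:\s*\n\s*pass\b")


def prevalence(sources: dict[str, str], pattern: re.Pattern = BAD_PATTERN) -> float:
    """Fraction of files whose text contains the pattern at least once."""
    if not sources:
        return 0.0
    infected = [path for path, text in sources.items() if pattern.search(text)]
    return len(infected) / len(sources)


# Made-up file contents standing in for a snapshot of the repository.
snapshot = {
    "billing.py": "try:\n    charge()\nexcept Exception:\n    pass\n",
    "orders.py": "try:\n    ship()\nexcept ValueError as err:\n    log(err)\n",
}
print(f"{prevalence(snapshot):.0%} of files carry the pattern")
# 50% of files carry the pattern
```

Run against each commit or nightly build, a rising prevalence is the early warning that a pattern has found a chain of transmission.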

Engineering leaders spend a lot of time thinking about how to produce more code. The more useful question is how to ensure that the code you produce does not contaminate the code you already had. Epidemiology has been thinking about that problem for two hundred years. The interventions that matter are raising the recovery rate and vaccinating enough of the population that bad patterns cannot find a chain to run through.

[1] No-one really writes it down, but we all like to pretend that we do.

[2] During COVID, almost every night there would be a reporter trying to explain R0!