Cheap Code means more Governance

(or at least I think it does)

Every productivity breakthrough in history[1] has created more oversight, not less. AI-generated code won’t be different.

Nobody would argue that AI can’t generate a lot of code! The marginal cost of producing code is heading to zero. But validating that code? That’s still expensive, still slow and still involves a human.

The instinct is to assume that this will sort itself out. That we’ll find some magic way to review faster, or that AI will advance such that code won’t need to be reviewed. History suggests otherwise; every major productivity breakthrough has generated more infrastructure for oversight.

When copying gets cheap, governance gets expensive

The Gutenberg press collapsed the cost of copying a book from months of scribal labour to hours of mechanical work. Within a century, Henry VIII had issued England’s first list of banned books, the Stationers’ Company had been granted a royal charter to act as censors, and the Catholic Church had published its Index Librorum Prohibitorum (only abolished in 1966!). Five hundred years of governance infrastructure, all because copying got cheap.

Spreadsheets democratised financial modelling in a way that nothing before them had managed. Anyone could build a model. Anyone could project revenue, calculate risk, forecast growth. The problem was that “anyone” included people who make mistakes (all of us!) and people who manipulated numbers deliberately. Studies consistently find error rates of 20-90% in corporate spreadsheets. The damage reached the scientific record too: because Excel auto-converts gene names like SEPT1 and MARCH1 into dates, over 30% of genomics papers published with supplementary Excel gene lists ended up with corrupted data.

The tool was so good at making things easy that it was also excellent at making errors invisible.

On the fraud side, both Enron and WorldCom involved complex spreadsheet-enabled financial manipulation. Spreadsheets didn’t cause the fraud, but they made it dramatically easier to construct and harder to detect. The resulting Sarbanes-Oxley Act of 2002 created an entire apparatus of internal controls, CEO/CFO certification of financial statements, and independent audit requirements. SOx compliance became an industry in itself. Entire careers now exist that wouldn’t if financial modelling had stayed expensive and manual.

Cheap modelling didn’t reduce the need for oversight. Instead, it created a filtering problem that required a massive expansion of governance to address.

Why abundance always demands new filters

In 1971, Herbert Simon used a simple analogy: a rabbit-rich world is a lettuce-poor world. What’s abundant makes what it consumes scarce. His point was that a wealth of information creates a poverty of attention. An information-processing system only reduces net demand on attention if it absorbs more than it produces. Or as he put it, only if it listens and thinks more than it speaks.

AI code generators speak far more than they listen.

Clay Shirky sharpened this in 2008.

The problem is filter failure. Before the internet (and before cheap production generally), high production costs served as quality filters. When production costs collapse, the filtering disappears. The challenge is building better filters.

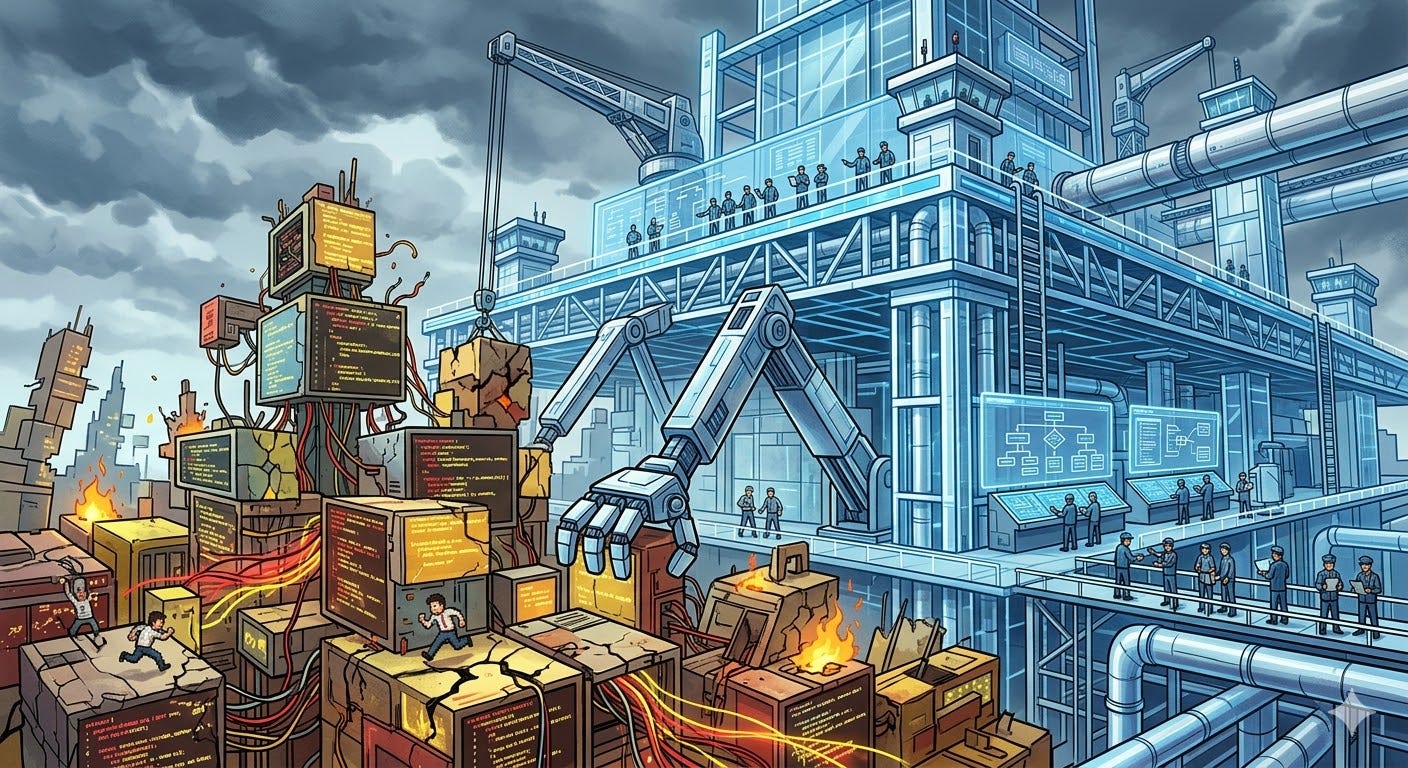

This maps to where we are with AI-generated code. The production cost has collapsed. The filtering mechanisms (code review, testing, security scanning, architectural review) haven’t scaled to match. We have a filter failure problem, and we’re going to solve it the same way every other industry has, with governance.

The governance is already arriving

Maybe you’re thinking this time is different? Well, already we’re seeing new bureaucratic structures and governance strategies emerge.

Both the US and EU have started producing frameworks for regulation. NIST published SP 800-218A in 2024, creating an entirely new Secure Software Development Framework profile for generative AI. The EU AI Act became partially active in February 2025.

Vendors are building governance into their platforms. GitHub launched enterprise “AI Controls” in mid-2025: audit logs with agent visibility, an “AI manager” custom role, MCP enterprise allowlists, and mandatory code scanning for every agent-generated PR.

Standards bodies are creating entirely new categories. ISO/IEC 42001 is the first global AI management system standard. OWASP created a dedicated LLM Top 10 vulnerability taxonomy and expanded it to include an Agentic AI Security Framework. ISACA launched a new “Advanced in AI Audit” credential. The factory inspector role Mk2?

And within the tooling space, new concepts are appearing: provenance tools for tracking AI-generated changes and linking them to the model that produced them, new gating frameworks for CI/CD systems, and new practices for storing prompt documentation in PR descriptions. All of this best practice is still emerging.

No wonder that Forrester projects the AI governance software market will see 30% CAGR through 2030!

Ashby’s Law and what it means for you

The theoretical frame that ties all of this together is Ashby’s Law of Requisite Variety[2]. The law states that a control system must have at least as much variety as the system it’s trying to control. If you increase the variety of outputs a system produces (which is precisely what AI-assisted development does), you must develop a governance layer of at least equivalent variety, or you lose control.

It’s happened with printing and spreadsheets, and I believe it’ll happen with code.

I don’t have a neat five-point plan here (or even a one-point plan). But I’ve been thinking about two directions that feel right.

Mechanised trust. When change happens faster than humans can review it, you need systems that produce evidence of safety rather than relying on humans to notice problems. Automated verification, provenance tracking, policy-as-code.
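To make “policy-as-code” a little less abstract, here’s a minimal sketch of what I have in mind. Everything in it is an assumption for illustration: the `ChangeEvidence` fields, the policy wording and the `violations` helper are hypothetical names, not any real tool’s API. The idea is simply that each change carries machine-readable evidence, and the rules are checked by a program rather than noticed (or not) by a person.

```python
from dataclasses import dataclass


@dataclass
class ChangeEvidence:
    """Evidence attached to a change. All field names are hypothetical."""
    tests_passed: bool = False
    security_scan_passed: bool = False
    ai_generated: bool = False
    model_recorded: bool = False  # provenance: is the generating model recorded?


# Each policy is a (description, predicate) pair evaluated against the evidence.
POLICIES = [
    ("tests must pass", lambda e: e.tests_passed),
    ("security scan must pass", lambda e: e.security_scan_passed),
    (
        "AI-generated changes must record their model",
        lambda e: (not e.ai_generated) or e.model_recorded,
    ),
]


def violations(evidence):
    """Return the descriptions of every policy the evidence fails."""
    return [description for description, check in POLICIES if not check(evidence)]


if __name__ == "__main__":
    change = ChangeEvidence(tests_passed=True, security_scan_passed=True, ai_generated=True)
    print(violations(change))
```

A CI gate built on something like this would block the merge whenever `violations` returns a non-empty list, and the list itself becomes the audit trail.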

Risk-tiered review. Not all code carries the same risk. A documentation fix and a change to your authentication system are not the same thing and treating them identically is a waste of scarce reviewer attention. Good architecture (separation of concerns, clear boundaries) can make this possible and tiered governance means you can move fast on low-risk changes while applying serious scrutiny where it counts.
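Here’s a rough sketch of how tiering might look if you key it off the files a change touches. The path patterns, tier names and function names are all made up for illustration; a real repository would define its own, and would probably want more signals than file paths alone.

```python
from fnmatch import fnmatchcase

# Hypothetical path patterns, checked in order, so "high" wins over "low".
RISK_TIERS = [
    ("high", ["auth/*", "payments/*", "*.tf"]),
    ("low", ["docs/*", "*.md"]),
]
DEFAULT_TIER = "medium"
TIER_ORDER = {"low": 0, "medium": 1, "high": 2}


def tier_for_path(path):
    """Return the first tier whose patterns match the path, else the default."""
    for tier, patterns in RISK_TIERS:
        if any(fnmatchcase(path, pattern) for pattern in patterns):
            return tier
    return DEFAULT_TIER


def tier_for_change(paths):
    """A change is as risky as the riskiest file it touches."""
    if not paths:
        return DEFAULT_TIER
    return max((tier_for_path(p) for p in paths), key=TIER_ORDER.__getitem__)


if __name__ == "__main__":
    print(tier_for_change(["docs/intro.md"]))
    print(tier_for_change(["docs/intro.md", "auth/session.py"]))
```

The design choice that matters is the “riskiest file wins” rule: a documentation fix bundled with an authentication change gets the authentication level of scrutiny. That only works if the architecture keeps risky code in places you can actually name.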

If you’re a software engineering leader, I’d pay more attention than ever to understanding whether each and every pull request carries an acceptable level of risk. And then ask yourself: how would I prove that?

[1] I admit I haven’t reviewed them all, but opening with “Some productivity breakthroughs have created more oversight” would be a really boring opener.

[2] I keep referencing this because The Unaccountability Machine introduced me to it. I can’t recommend this book highly enough!
